Understanding Procedural Safety Barriers Part 2: Checklist

By Mr. Sean Bordenave, HQ AMC CRM/TEM Program Manager

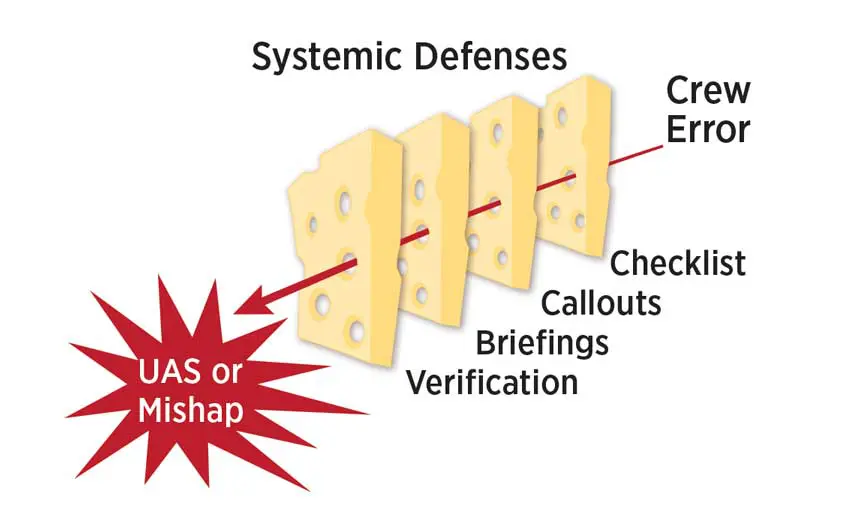

Have you ever stopped and wondered about the reasoning behind some of our procedures? Those procedures are there to ensure we properly operate the aircraft. They become our safety barriers. There are many procedures that we utilize while operating an aircraft, but briefings, checklists, cross-verification, and callouts represent some important procedural safety barriers.

As highlighted by Dr. James Reason’s “Swiss Cheese” Model, these procedures provide an opportunity to detect and correct errors that we might make while flying. If we omit those procedures or we do not properly utilize them, then they could become “holes” in the Swiss cheese that allow an error chain to continue. In other words, those procedural barriers become procedural errors in the error chain.

The one procedural barrier that we are probably most familiar with is the checklist. It was one of the first procedural barriers implemented to improve flight safety. As a result of a 1935 B-17 accident in which the pilots failed to remove the flight control lock, the U.S. Army Air Corps directed the implementation of the checklist.

More importantly, the implementation of checklist procedures represented a standardized approach to operating an aircraft:

In the 1930s, the quality of aircrew performance was improved by a simple, effective form of standardization: the checklist. Like a recipe, a checklist consisted of written, step-by-step procedures that ensured Airmen performed their duties in the correct manner and sequence. Even experienced pilots benefited from this tool.

Where did checklists come from? https://www.acc.af.mil/News/Article-Display/Article/200135/where-did-checklists-come-from/

CHECKLIST—A STANDARDIZED APPROACH

Before we get into specifics regarding checklist errors and their impact, we need to understand the different underlying checklist philosophies and designs, which are critical to how we implement this procedural barrier.

“READ-DO” CHECKLIST PHILOSOPHY VS. “FLOW & CHECK” CHECKLIST PHILOSOPHY

Checklist philosophy and design are best understood when we look at Mobility Air Forces aircraft from the perspective of a legacy Major Weapon System (MWS) versus a newer MWS. This perspective is valuable because checklist philosophy and crew complement have evolved over time, and we can see those differences depending on the age of the MWS. Procedures for legacy aircraft, such as the KC-135, C-5, and legacy C-130, applied a “read-do” checklist style, which meant “read” a checklist step, then “do” that checklist step; thus, checklist verification was applied as each step was performed. Additionally, every step was verified by a checklist. For those legacy systems, the checklist protocols have evolved somewhat, but the underlying philosophy remains, most notably the verification of every procedural step. With newer aircraft, such as the KC-10 and the C-130J, the procedures show the evolution to a scan/flow and check philosophy. In this checklist philosophy, the crew performs the procedural steps in a geographical panel flow without direct reference to the checklist (unless desired) and then afterward verifies only critical procedural items using a checklist.

Despite the differences in checklist types, the intent of all checklists is to ensure (verify) that critical aircraft systems and flight controls are properly set for the given phase of flight. Unfortunately, all checklists are vulnerable to complacency, distraction, interruption, and rushing, because a human is utilizing the checklist for verification.
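The difference between the two philosophies described above can be pictured with a minimal sketch. Everything in it is an illustrative assumption rather than actual T.O. content: the step names, the switch positions, and which items are marked critical are all hypothetical. It simply shows that read-do verifies every step as it is performed, while flow and check verifies only the critical items after the flow is complete.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Panel = Dict[str, str]  # switch name -> current position (hypothetical model)


@dataclass(frozen=True)
class Step:
    item: str       # switch or control named in the procedure
    required: str   # position the procedure calls for
    critical: bool  # True if a flow-and-check checklist re-verifies it


def read_do(steps: List[Step], panel: Panel) -> List[str]:
    """Read one step, do it, confirm it, then move on. Every step is verified."""
    verified = []
    for step in steps:
        panel[step.item] = step.required       # "do" the step
        if panel[step.item] == step.required:  # verify as the step is performed
            verified.append(step.item)
    return verified


def flow_and_check(steps: List[Step], panel: Panel,
                   flow: Callable[[Panel], None]) -> List[str]:
    """Run the flow from memory, then verify only the critical items."""
    flow(panel)
    return [s.item for s in steps
            if s.critical and panel.get(s.item) != s.required]


# Hypothetical steps; names and criticality are assumptions for illustration.
STEPS = [
    Step("pitot_heat", "ON", critical=True),
    Step("speed_brake", "STOWED", critical=True),
    Step("cabin_lights", "DIM", critical=False),
]


def interrupted_flow(panel: Panel) -> None:
    """A flow that is interrupted before reaching pitot heat and cabin lights."""
    panel["speed_brake"] = "STOWED"


print(read_do(STEPS, {}))
# ['pitot_heat', 'speed_brake', 'cabin_lights']

panel = {"pitot_heat": "OFF", "speed_brake": "EXTENDED", "cabin_lights": "BRIGHT"}
print(flow_and_check(STEPS, panel, interrupted_flow))
# ['pitot_heat']
```

In the second call, the follow-up check traps the missed critical item (pitot heat) but says nothing about the non-critical one, which is the trade-off the flow-and-check design accepts. In both styles the sketch assumes the verification itself is actually performed; a human who calls the item without looking defeats either one.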

VULNERABILITIES TO OUR PROCEDURAL DEFENSES

Unfortunately, our procedural defenses are susceptible when events disrupt our normal habit patterns or when our own mental awareness or state of mind impedes our teamwork or response.

As you will see in the following Aviation Safety Action Program (ASAP) examples, threats, such as marginal weather and aircraft malfunctions, play a role in distracting or interrupting the crew’s habit patterns or procedures, which can lead to crew error. In these events, Crew Resource Management (CRM) becomes our next layer of defense.

ASAP CHECKLIST ERROR EXAMPLES

As we go through these KC-135 ASAPs, which focus on checklist errors, keep in mind that although the examples might be KC-135-specific, the lessons learned apply to all MWSs. Checklists are universal in their intent—to provide verification that a procedure is completed correctly.

ASAP #20687 SUMMARY [KC-135 AIRCRAFT]

Incident aircraft was a KC135R. The morning of [the] incident was high temperature, high humidity, and a tail swap was involved which were potential contributing factors. All checklists were thought to be completed normally and the aircraft was cleared for takeoff. PF [Pilot Flying] was left seat and PM [Pilot Monitoring] was right seat. The PF called for gear up. NLG [nose landing gear] did not retract, MLG [main landing gear] retracted normally. The gear handle was left in the up position and the PM was instructed to set up a hold with ATC [air traffic control] at 10,000 feet to allow the use of autopilot and start looking for the appropriate checklist. PM set up the hold and confirmed with the PF. While still climbing out to 10,000 feet, PM found the checklist Nose Gear Extended, Both Main Gear Retracted, and neither the PM or PF realized the checklist was a subset of Preparation for Gear Up Landing. Referencing the Nose Gear Extended, Both Main Gear Retracted, the crew read accomplish steps 1 through 12 of the preparation for gear up landing and decided that checklist was not appropriate. PF told PM to keep looking for something more appropriate. Both PM and PF failed to find Retraction of Landing Gear with Nose Gear Ground Downlock & Release Handle Installed located under Non-Critical Procedures on the next page of the Table of Contents. After the crew leveled off at 10,000 feet and turned on the autopilot, the PF asked if the NLG downlock was installed. Crew then realized that the NLG downlock handle was still installed, a missed checklist item. PF directed gear down and removed the downlock, and then the gear was retracted normally. Aircraft flew to destination without further incident. Full NLG inspection was completed with no defects noted.

While the submitter focused on the crew actions after the nose gear failed to retract, our analysis will focus on the NLG downlock missed by the Boom Operator (BO) during preflight duties. Using Threat/Error Management (TEM), the submitter describes the missed action (removing the NLG downlock) and the resultant Undesired Aircraft State (UAS). The application of TEM then helps us think about how we would “trap” or detect this error before it becomes a UAS. We detect or trap an error through our procedural defenses (callouts, briefings, cross-verification, etc.). In this case, the procedural defense is the checklist. During preflight duties, Technical Order (T.O.) 1C-135(K)R(II)-1 directs the BO to remove, stow, and check the NLG downlock three times in the procedures/checklist:

BO PREFLIGHT

# *6. Nose Gear Ground Downlock and Release Handle—Remove and stow

Failure to remove the nose gear ground downlock and release handle prior to retracting the landing gear may cause damage to the downlock and release mechanism which could render the nose gear emergency extension system inoperative. An Air Force Technical Order (AFTO) Form 781 entry is mandatory.

BO MISCELLANEOUS DUTIES

Sequence may be varied and be accomplished any time prior to engine start.

# *2. Safety Locks—Stow

Ensure the nose gear ground downlock and release handle, nose gear external downlock, nose gear steering lockout pin, two main gear external downlocks, two main landing gear door external downlocks, and the nose gear inflight downlock pin are stowed prior to engine start.

BEFORE TAKEOFF

6. Takeoff Report—Complete (BO, N [navigator], CP [copilot])

BO reports, “Safety locks stowed, boom ready for takeoff.” At this time the BO will monitor ATC frequency.

These procedures represent three opportunities to remove, stow, and verify the NLG downlock. As the submitter mentioned in the ASAP, high temperature, high humidity, and a tail swap most likely contributed to the missed NLG downlock. Tail swaps (threat), which can lead to mission pressure and rushing, are scenarios where crews will look for procedural short-cuts, or quickly “gloss over” the checklist items to get back on the timeline. Unfortunately, a missed checklist item represents a potential UAS, which should be avoided. Remember, slow is smooth and smooth is fast.

Note: The number of times T.O. 1C-135(K)R(II)-1 directs the BO to verify that the NLG downlock is removed and stowed does not indicate that the procedure is that much safer or more effective because it is checked multiple times. The number of times is simply the number of opportunities that the procedures require verification of correct configuration.

ASAP #18533 SUMMARY [KC-135 AIRCRAFT]

After level off at FL290, a climb to FL320 was requested. The PF autopilot did not respond as expected. The PF gave multiple commands to autopilot, suspecting issue with autopilot. After verifying differences between PF and PM instrumentation, both pilots analyzed that the PF data was incorrect. Flight controls were switched to the other pilot. The autopilot was turned off and the autopilot flight systems were switched to the other system. The aitooane [altitude alerter] was labeled at FL310. The pitot heat switch was off. After it was turned on, systems returned to normal. Normal operation after [the] pitot switch turned on, and flight continued.

What is your recommended corrective action [Submitter’s comments]?

Checklist discipline and missed verification of switch placement where verified.

As written in the recommended corrective action by the submitter, this ASAP shows another checklist error, in which the pitot heat was not verified as being “on” per the checklist. Verification is an important distinction among checklist errors: a skipped or missed checklist item is different from failing to visually “verify” the setting. In the latter case, the checklist item may be called (challenge/response) or reviewed, but the crewmember does not actually look and verify the correct setting. This failure makes the checklist an ineffective procedural barrier. Additionally, as shown in the previous T.O. 1C-135(K)R(II)-1 excerpt, this critical checklist does not require verification by both pilots, so visual verification by the copilot is essential to detect an incorrect setting.

From a TEM perspective, this ASAP highlights several other factors. First, the ASAP initially demonstrates that the pilots were unaware of the root cause of the issue: the pitot heat being off. Next, the ASAP gives us insight into how long the pilots were unaware that the pitot heat was off. The Pitot Heat, a step on the checklist, is contained in the STARTING ENGINES AND BEFORE TAXI procedure in T.O. 1C-135(K)R(II)-1. Thus, from engine start through taxi, takeoff, and climb to FL290, the pilots were unaware the pitot heat was off. Since all procedural safety barriers were exhausted, the omission of the pitot heat went undetected for approximately 37 minutes (from the last engine start until the crew recognized the issue). These two factors indicate that the end result was a UAS. While the crew did a good job troubleshooting the issue, determining the root cause, and recovering from the UAS, the event still represents a reduction in safety margins.

CHECKLIST VULNERABILITIES

Unfortunately, like other procedural safety barriers, checklists are not “pilot proof.” Our human vulnerabilities such as fatigue, complacency, and overconfidence can degrade our ability to effectively utilize a checklist. Likewise, events, such as mission changes and aircraft malfunctions, also degrade checklist effectiveness by disrupting our normal habit patterns or increasing our workload. The next ASAP example will showcase one of these vulnerabilities. Additionally, the situation allows us to examine how CRM would have been an effective resource in managing the situation.

ASAP #19512 SUMMARY [KC-135 AIRCRAFT]

After a low speed abort due to an airspeed mismatch, the decision was made to try the takeoff a second time. The before takeoff and normal checklist/safeties were called and we were cleared for takeoff. The airspeed indicated normal so the takeoff was continued. During rotation we noticed the speed brakes were still extended and the warning horn failed to sound. The speed brakes were promptly stowed and the climb out was continued without issue. Mx [maintenance] was notified of the issue after landing.

What is your recommended corrective action [Submitter’s comments]?

Better guidance on checklist direction after an abort to ensure configuration or an addition to the before takeoff checklist.

While the submitter addresses a critical issue with a safety system (i.e., warning horn failing to work), there are most likely also checklist errors in this sequence, which led to the subsequent takeoff with the speed brake deployed (the UAS).

As highlighted in the previous excerpt, after completing the boldface items, the KC-135 abort procedures direct the pilot to reposition the speed brake to stowed (0 degrees). Furthermore, after completing the boldface procedures, the crew coordination paragraph emphasizes checklist completion. This crew coordination is crucial because the event disrupts the normal habit pattern. In this situation, where a subsequent takeoff is plausible, the crew must bring the aircraft under control (perform the boldface), coordinate with ATC for the aborted takeoff and exiting the runway, complete the checklist, troubleshoot the malfunction, and then prepare for the subsequent takeoff. These actions may not occur in a smooth and orderly flow; thus, we can see how checklist completion could have been an issue.

Furthermore, the submitter stated that the Before Takeoff Checklist was also completed. The Before Takeoff Checklist does include a speed brake verification step. While the ASAP is unclear about why the checklist procedure failed (e.g., incomplete checklist, missed checklist step, or failure to visually verify the speed brake setting), the disruptive nature of an aborted takeoff underscores the importance of checklist discipline.

WRAPPING IT UP

The checklist is one of our oldest procedural methods for trapping and correcting errors. Checklists provide a standardized, regimented methodology for crews to operate seamlessly together in a dynamic environment. However, checklist procedures are vulnerable and less effective when crews deviate from standards. Due to repetition and experience level, we quite often become complacent in our checklist usage and discipline. We do things like perform checklists from memory, time ourselves on how fast we can perform a preflight checklist, or deviate from checklist protocols under the “mission accomplishment” banner. Unfortunately, those deviations become accepted practices, which degrade standardization and effectiveness of this vital procedure.

Additionally, we operate in a dynamic environment and sometimes in a high ops tempo. Unusual or demanding events can break down our normal habit patterns. A high ops tempo might impact human factors, such as fatigue, that could erode our mental alertness. Effective CRM should be our next layer of defense to ensure we implement checklist procedures in an effective manner.

Finally, there are aircraft safety systems, which are our final safety nets to detect and correct errors. However, we should not become complacent in our first and second lines of defense simply because there are aircraft safety systems on board.